Gen AI

Unlock the Value of AI Securely Throughout Your Enterprise

Gen AI has changed the game, bringing people closer than ever to the cutting edge… but also closer to data leaks

The Problem

Harnessing Generative AI means governing it

Zenity helps you avoid common security and compliance risks stemming from Gen AI development

Lack of Visibility

Without proper security, it is nearly impossible to track how people interact with enterprise copilots and build their own apps

Insecure by Design

With no SDLC to catch mistakes, less technical business users are more prone to connecting insecure plugins to enterprise copilots and creating risky apps and automations

Excessive Privileges and Access

Due to default settings and common misconfigurations, developers often grant apps and automations more access and privileges than they need, resulting in data leaks

Lack of Control

Lacking visibility and control, many security leaders simply block business users from using AI tools, stunting innovation

The Solution

AI changes everything. Except the need for security

Zenity’s platform provides full visibility and control over enterprise AI copilots, allowing our customers’ businesses to flourish

Maintain Visibility

Establish control and awareness of all user-built bots, copilots, and plugins throughout the AI landscape

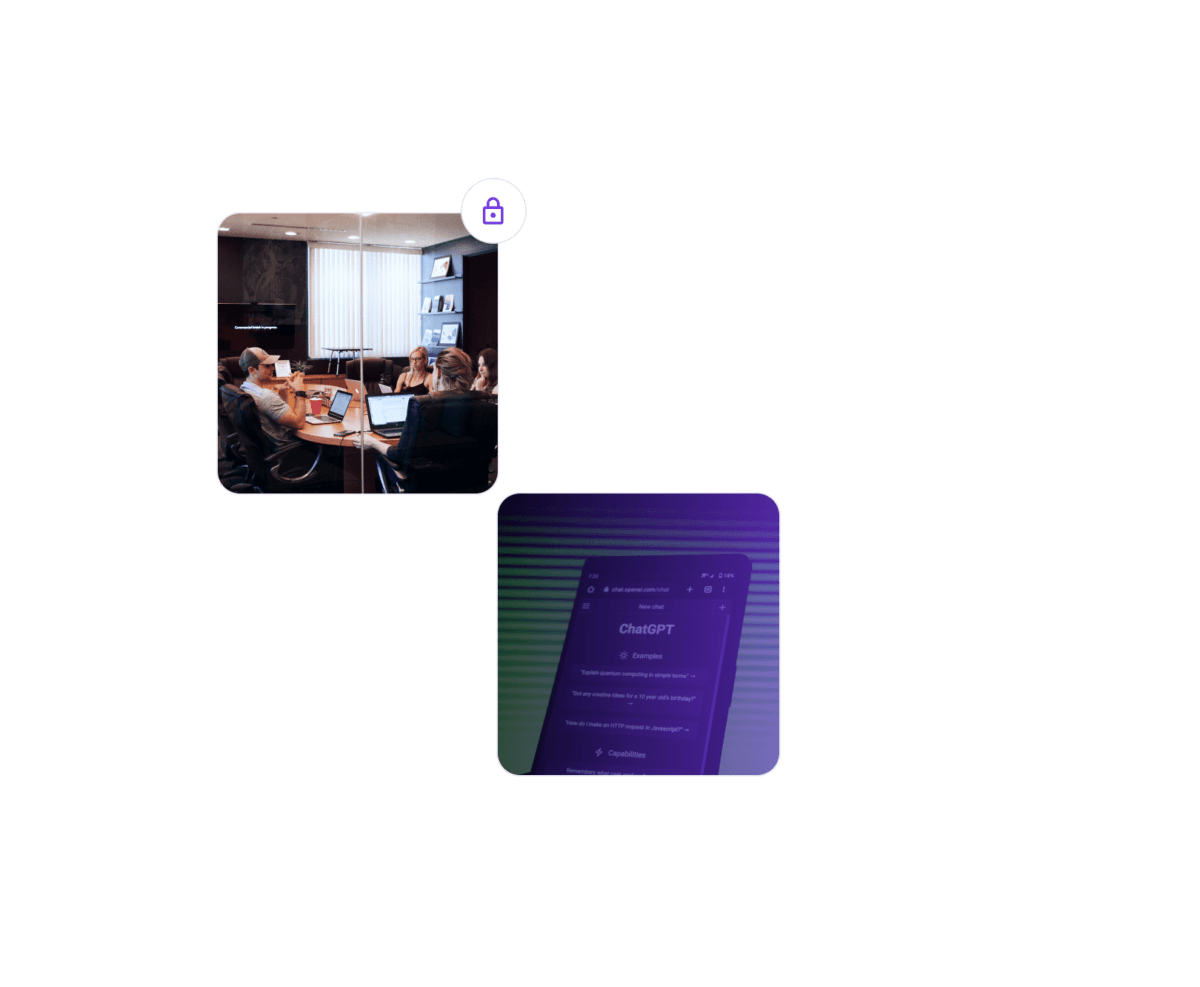

Assess and Manage Risk

Detect risks such as overshared copilots, hard-coded secrets, and vulnerable plugins

Real-Time Governance

Implement guardrails for secure use of Generative AI via playbooks and customizable policies

Secure AI Throughout the Enterprise

As users of all technical backgrounds lean on AI to get more done, Zenity keeps them secure

Continuous Discovery

Detect every plugin used across Microsoft 365 Copilot, as well as any app built with or using AI

Confidently Unleash AI

Too many organizations have prevented or limited the use of AI due to security concerns. No longer!

Minimize Risk

Prevent data leakage by identifying vulnerabilities in enterprise and custom AI copilots

Govern AI Responsibly

Implement guardrails that keep data secure as business users build and interact with AI

Making Sense of AI in Cybersecurity

Want to learn more?

We’d love to hear from you and talk about all the latest updates in the world of low-code, no-code, and AI-led development